This is a rather huge geekout, so if you’re not into drive performance on various machines, feel free to ignore this post. =) Its original goal was to look at the performance difference between a 2009 Mac Mini, a 2009 Mac Mini Server, and a 2010 Mac Mini Server. It then grew into “Let’s compare all the storage I have in my rack”.

In this post, I’m comparing the results from five different machines. They are:

- Early 2009 Mac Mini (4GB RAM), single 320GB 5400 RPM Drive (Model: Fujitsu MHZ2120BH G1)

- Late 2009 Mac Mini Server (4GB RAM), dual 500GB 5400 RPM Drives (Model: Hitachi HTS545050B9SA02) (RAID1)

- Xserve 2009 (12GB RAM), dual 160GB 7200 RPM Drives (Model: WDC WD1602ABJS-43P5A0) (RAID1), and single 160GB 7200 RPM Drive (Model: WDC WD1602ABJS-43P5A0)

- Mid-2010 Mac Mini Server (4GB RAM), dual 500GB 7200 RPM Drives (Model: Hitachi HTS725050A9A362) (in both single-drive and RAID1 configurations)

- And for fun, Xserve 2009 (12GB RAM) with Xsan 2.2.1: data on 3 Xserve RAID LUNs (6-drive RAID5), metadata on a single Xserve RAID LUN (2-drive RAID1).

- For extra fun, Dell 2850 (5GB RAM), six 72GB 15,000 RPM Drives (Model: Fujitsu MAX3073NC) in RAID10 (three RAID1 pairs striped together).
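To make the Dell’s “three RAID1 pairs striped together” layout a bit more concrete, here is a minimal Python sketch of how a RAID10 array of six drives could map logical blocks onto mirrored pairs. It assumes plain round-robin striping with a stripe unit of one block and hypothetical drive names (`d0` to `d5`); the actual stripe size and layout used by the 2850’s controller aren’t known here.

```python
# Sketch of RAID10 addressing: logical blocks are striped round-robin across
# mirrored pairs (RAID1), and each block is stored on both drives of its pair.
# Assumptions (hypothetical): 6 drives named d0..d5, stripe unit of 1 block.

DRIVES = [f"d{i}" for i in range(6)]
PAIRS = [DRIVES[i:i + 2] for i in range(0, len(DRIVES), 2)]  # 3 RAID1 pairs


def locate(logical_block: int) -> tuple[list[str], int]:
    """Return the mirrored drives holding a logical block and its offset on them."""
    pair_index = logical_block % len(PAIRS)  # which RAID1 pair in the stripe
    offset = logical_block // len(PAIRS)     # block offset within that pair
    return PAIRS[pair_index], offset


def usable_capacity_gb(drive_gb: float, drive_count: int) -> float:
    """RAID10 mirrors everything once, so half the raw capacity is usable."""
    return drive_gb * drive_count / 2


if __name__ == "__main__":
    for block in range(6):
        drives, offset = locate(block)
        print(f"block {block} -> {drives} @ offset {offset}")
    # Six 72GB drives, as in the Dell 2850.
    print(f"usable: {usable_capacity_gb(72, 6):.0f} GB")
```

Because every block lives on two drives and consecutive blocks land on different pairs, random reads can in principle be spread across all six spindles, which is consistent with (though not proof of) the Dell’s very high random-seek numbers.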

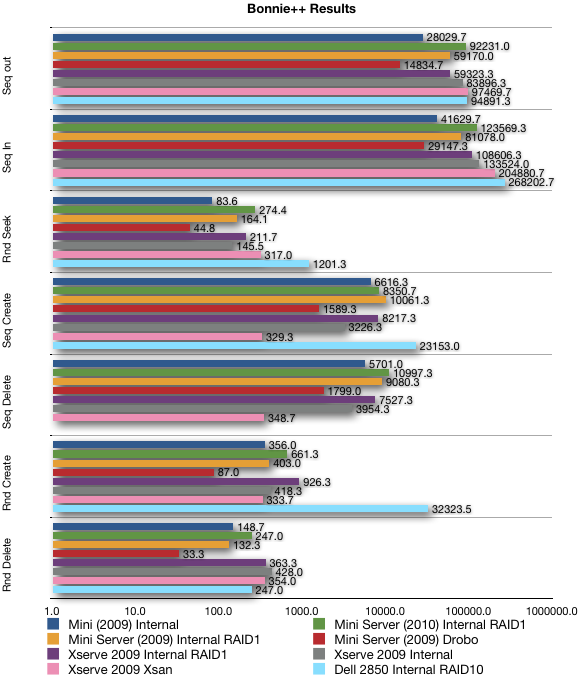

All stats were created with bonnie++ 1.03d (1.03e will not build on 10.6.5 due to its lack of support for O_DIRECT). All tests will include the bonnie++ parameters as well as the results. The purpose of these tests is mostly to see how various setups compare, and they were prompted by Splunk performance on various drive configurations: Splunk relies heavily on disk I/O (mainly IOPS). Sadly, I have no way to test SSD performance. Perhaps at some point in the future. Please note that the graph uses a logarithmic scale, so check the label on each bar rather than comparing relative bar sizes (unless you think logarithmically).
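As a quick illustration of reading a log-scale chart, this Python sketch takes a few random-seek figures from the results table and prints their base-10 logarithms, which is what bar height tracks on a logarithmic axis. It shows how a roughly 14x difference between two setups shrinks to a gap of just over one unit.

```python
import math

# Random-seek results (seeks/sec) taken from the results table.
rnd_seek = {
    "Mini 2009 Internal": 83.6,
    "Mini Server 2010 RAID1": 274.4,
    "Dell 2850 RAID10": 1201.3,
}

for name, value in rnd_seek.items():
    # On a log10 axis, bar height is log10(value): +1 means 10x larger.
    print(f"{name:<24} {value:>8.1f}  log10 = {math.log10(value):.2f}")

# Linear ratio vs. the visual gap on a log axis for the fastest and slowest setups.
ratio = rnd_seek["Dell 2850 RAID10"] / rnd_seek["Mini 2009 Internal"]
gap = math.log10(rnd_seek["Dell 2850 RAID10"]) - math.log10(rnd_seek["Mini 2009 Internal"])
print(f"linear ratio {ratio:.1f}x, log gap {gap:.2f}")
```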

Beyond the obvious (the Dell kicked everything else’s ass, as you’d expect from a RAID10 of 15k RPM drives, which is also why there is no Seq Delete result for the 2850: it was just too fast) and the Drobo being pretty slow in comparison, the main takeaway is this: going from the 5400 RPM drives in the 2009 Mac Mini Server to the 7200 RPM drives in the 2010 Mac Mini Server gives a 67% improvement in IOPS (I/O operations per second, represented by the “Rnd Seek” value). That seems rather high, since 7200 RPM drives spin only about 33% faster than 5400 RPM ones. But given it’s 2 drives, maybe that accounts for the extra gain? I’m not sure I can say one way or the other.

And going from the previous Splunk machine (the early 2009 Mac Mini with a single 5400 RPM drive) to the new 2010 Mac Mini Server with two 7200 RPM drives gave me an IOPS increase of 228%!! Which explains why I see results MUCH faster now than I used to.
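The percentages above can be reproduced from the Rnd Seek column of the results table. This Python sketch also computes average rotational latency (half a revolution), which is one simple way to see why spindle speed alone would predict only about a 33% gain. It deliberately ignores seek time, drive cache, and RAID1 read behavior, so treat it as a back-of-the-envelope check rather than a model of the drives.

```python
# Random-seek results (seeks/sec) from the results table.
MINI_2009 = 83.6          # Early 2009 Mac Mini, single 5400 RPM drive
MINI_SERVER_2009 = 164.1  # Late 2009 Mini Server, 2x 5400 RPM RAID1
MINI_SERVER_2010 = 274.4  # Mid-2010 Mini Server, 2x 7200 RPM RAID1


def pct_increase(old: float, new: float) -> float:
    """Percentage increase going from old to new."""
    return (new / old - 1) * 100


def avg_rotational_latency_ms(rpm: int) -> float:
    """Average rotational latency: half a revolution, in milliseconds."""
    return 60_000 / rpm / 2


print(f"Mini Server 2009 -> 2010: {pct_increase(MINI_SERVER_2009, MINI_SERVER_2010):.0f}%")
print(f"Mini 2009 -> Mini Server 2010: {pct_increase(MINI_2009, MINI_SERVER_2010):.0f}%")
print(f"Spindle speed 5400 -> 7200: {pct_increase(5400, 7200):.0f}%")
for rpm in (5400, 7200, 15000):
    print(f"{rpm:>5} RPM: {avg_rotational_latency_ms(rpm):.2f} ms avg rotational latency")
```

The two Mini Servers also use different drive models (Hitachi HTS545050B9SA02 vs. HTS725050A9A362), so differences in seek time and cache, which this sketch doesn’t model, may explain part of the gap.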

Of tangential interest: the performance of my Drobo and of my Mac Mini Server at home are both lower than when I originally purchased them. That isn’t terribly unusual, since the free space on both is probably heavily fragmented, which would affect the bonnie++ results. Also, I’m not sure how Drobo performance changes as more drives are added: I started off with two drives in it, and between the original results and now I’ve added a third.

Here are the actual results in table form:

| Setup | Seq Out (K/sec) | Seq In (K/sec) | Rnd Seek (/sec) | Seq Create (/sec) | Seq Delete (/sec) | Rnd Create (/sec) | Rnd Delete (/sec) |
|---|---|---|---|---|---|---|---|
| Mini 2009 Internal | 28029.7 | 41629.7 | 83.6 | 6616.3 | 5701.0 | 356.0 | 148.7 |
| Mini Server 2010 RAID1 | 92231.0 | 123569.3 | 274.4 | 8350.7 | 10997.3 | 661.3 | 247.0 |
| Mini Server 2009 RAID1 | 59170.0 | 81078.0 | 164.1 | 10061.3 | 9080.3 | 403.0 | 132.3 |
| Mini Server 2009 Drobo | 14834.7 | 29147.3 | 44.8 | 1589.3 | 1799.0 | 87.0 | 33.3 |
| Xserve 2009 RAID1 | 59323.3 | 108606.3 | 211.7 | 8217.3 | 7527.3 | 926.3 | 363.3 |
| Xserve 2009 Internal | 83896.3 | 133524.0 | 145.5 | 3226.3 | 3954.3 | 418.3 | 428.0 |
| Xserve 2009 Xsan | 97469.7 | 204880.7 | 317.0 | 329.3 | 348.7 | 333.7 | 354.0 |
| Dell 2850 RAID10 | 94891.3 | 268202.7 | 1201.3 | 23153.0 | n/a (too fast) | 32323.5 | 247.0 |

Performance is fun. Splunk is kickass, and running it on hardware that can actually handle the I/O for a small shop like us (about 150-250MB/day of indexed data) makes it much more appealing to use. Now if only they’d bring back 64-bit support for 10.6, I’d be a happy camper. (64-bit support gives better performance largely because the “buckets” of data can be stored in fewer files, due, I think, to indexes and files being allowed to grow larger. A few large indexes give better search performance than the same data broken up into many smaller ones.)